Search

Search

Artificial intelligence is reshaping the threat landscape facing U.S. federal agencies. Malicious actors are increasingly leveraging AI to scale reconnaissance, impersonation, and credential theft. These tactics compromise traditional identity assurance mechanisms and reveal structural weaknesses in identity systems and capabilities.

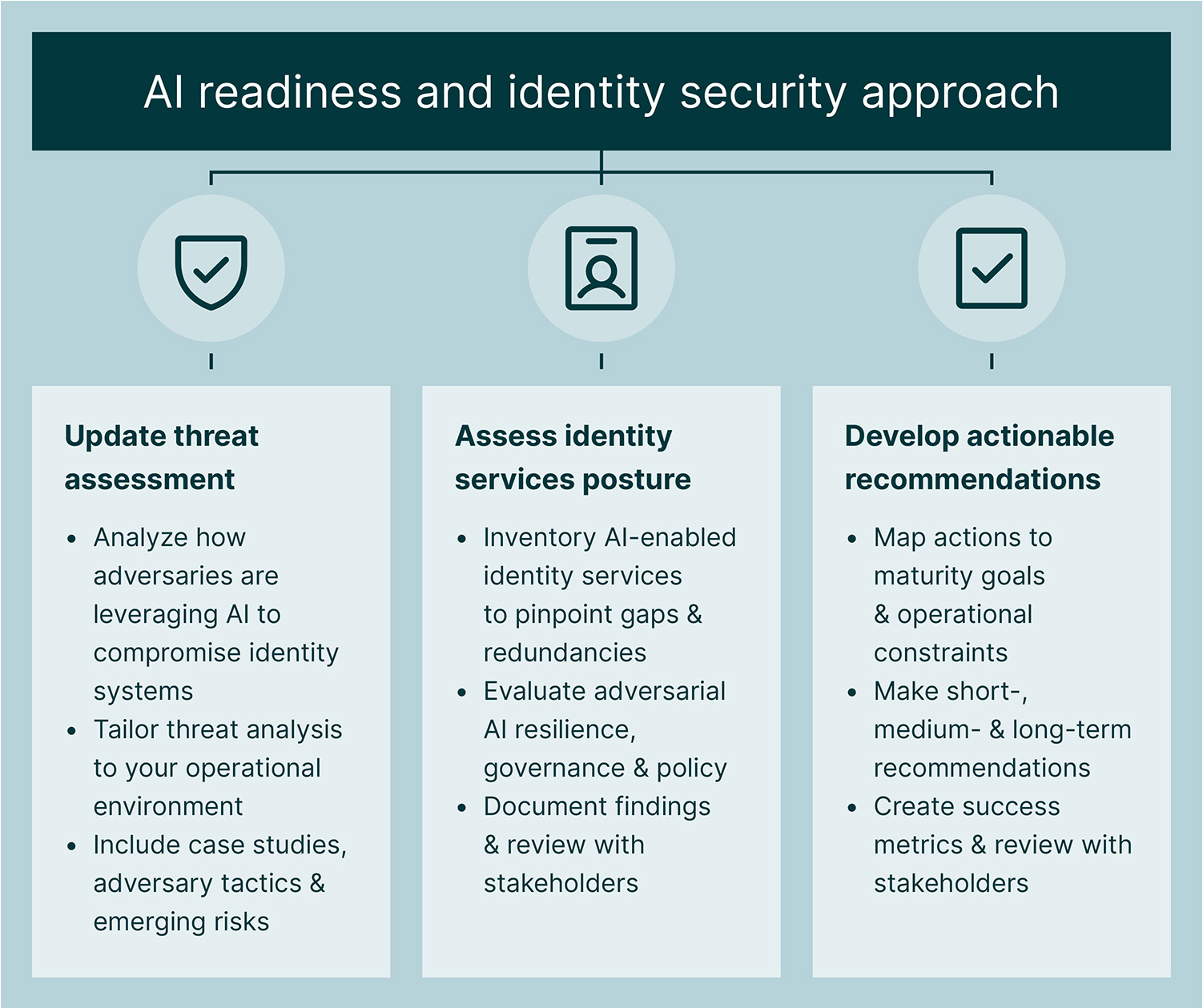

Adversarial AI threatens the foundations of many identity systems by attacking the people, practices, processes, and operations meant to keep us safe. Based on Guidehouse AI readiness research and our work supporting federal agencies and commercial organizations, our experts have identified a number of insights into today’s threat and risk environment. We recommend that federal agencies respond to these challenges by:

The prevalence of AI tools is democratizing cyberthreats and accelerating simply because of the lower barriers to entry. AI tools are easy to use, enabling threat actors to rely on operatives with little training to deploy them and to scale rapidly at low cost. Deep technical knowledge and specialized expertise are not prerequisites for launching sophisticated attacks.

Some of the elements undermining traditional identity systems and controls and introducing new risks across the cyber kill chain include:

Enhanced autonomous reconnaissance and targeting tools. Adversaries use AI to develop autonomous reconnaissance and targeting (ART) systems, which combine machine learning, natural language processing, and behavioral analytics to craft platform-specific attacks. ART systems can analyze vast networks in seconds to build product profiles, uncover potential targets, probe defenses, and generate product-specific exploit code. Continuously refining their strategies using deep learning, these systems execute attacks at machine speed, exploiting vulnerabilities faster than manual detection or traditional automated scans can react and adapt to in real time.

These systems compromise identity by automating reconnaissance to extract credential patterns, impersonate users, and bypass authentication protocols. As a result, AI-driven attacks are becoming more resilient and persistent—and they can evolve faster than current defensive measures can keep up without direct human input.

Deepfake-enabled impersonation. Generative AI tools allow attackers to clone voices and produce convincing video deepfakes with minimal input data. Such attacks exploit trust-based workflows and challenge biometric verification systems within identity workflows.

AI-powered social engineering. AI-automated phishing campaigns leverage public data to generate user personas and personalized messages, allowing bad actors to mimic writing styles and eliminate traditional fraud indicators much faster and more accurately than traditional approaches. These campaigns adjust tactics in real time based on user behavior, exploiting cognitive biases and authority cues. Nation-state actors have used generative AI to fabricate identities, rehearse culturally fluent interviews, and infiltrate U.S. organizations as remote workers—gaining privileged access and conducting operations from within.

Attacks on AI systems. AI systems themselves are vulnerable. Prompt injection attacks manipulate model behavior by crafting inputs that override system instructions. Training data poisoning can introduce bias, embed backdoors, and degrade model performance. These attacks compromise the integrity of AI-assisted identity verification workflows and enable adversaries to manipulate authentication decisions and exfiltrate sensitive identity data.

AI-driven decision pipelines used in agentic AI are also susceptible to compromise, where attackers target not only the model but the surrounding infrastructure. Risks include model inversion, membership inference, and data leakage, which challenge traditional concepts of anonymization and consent and can compromise identity assurance. Because these vulnerabilities are latent, emergent, and context-dependent, they require robust governance and secure integration.

Post-quantum risks. As quantum computing advances, it could undermine the encryption algorithms that form the backbone of identity services and potentially pose new types of cyberthreats to the public-key algorithms underpinning identity services. Adversaries may exploit “harvest now, decrypt later” strategies to retroactively access previously stolen encrypted identity data. Risks include forged authentication tokens, quantum-enabled cloning of smart cards, and interoperability disruptions. To stay secure in the quantum era, organizations need to adopt new encryption standards designed to resist quantum attacks and build systems that can quickly adapt to future cryptographic changes.

With expert guidance, your agency can tackle operational and adversarial challenges by operationalizing an AI readiness assessment, starting with your identity capabilities. This includes understanding the current threat landscape, evaluating your identity capabilities and their AI features, and developing short-, medium-, and long-term roadmaps to counter urgent threats.

In our approach, we design short-, medium-, and longer-term recommendations that mitigate key operational risks, integrate measurable success indicators, and engage stakeholders to drive proper implementation. Counteracting machine-speed attacks and social engineering where traditional MFA is ineffective requires a shift to “fighting AI with AI.” Short-term recommendations emphasize rapid visibility, identity governance, and the deployment of phishing-resistant authentication (FIDO2) and AI-driven anomaly detection capable of matching adversarial swarms. Medium-term priorities include behavioral biometrics and supply chain assurance, while long-term goals focus on strategic resilience, cryptographic modernization, and global interoperability.

As identity systems increasingly become targets for scalable deception and algorithmic compromise, it’s crucial to strengthen yours and prepare for AI-enhanced risks through a thorough evaluation and remediation plan. By evolving identity protections alongside adversarial techniques, you can establish a more coherent, durable, adaptive foundation for identity security.

Guidehouse is a global AI-led professional services firm delivering advisory, technology, and managed services to the commercial and government sectors. With an integrated business technology approach, Guidehouse drives efficiency and resilience in the healthcare, financial services, energy, infrastructure, and national security markets.